What is LLMInspect?

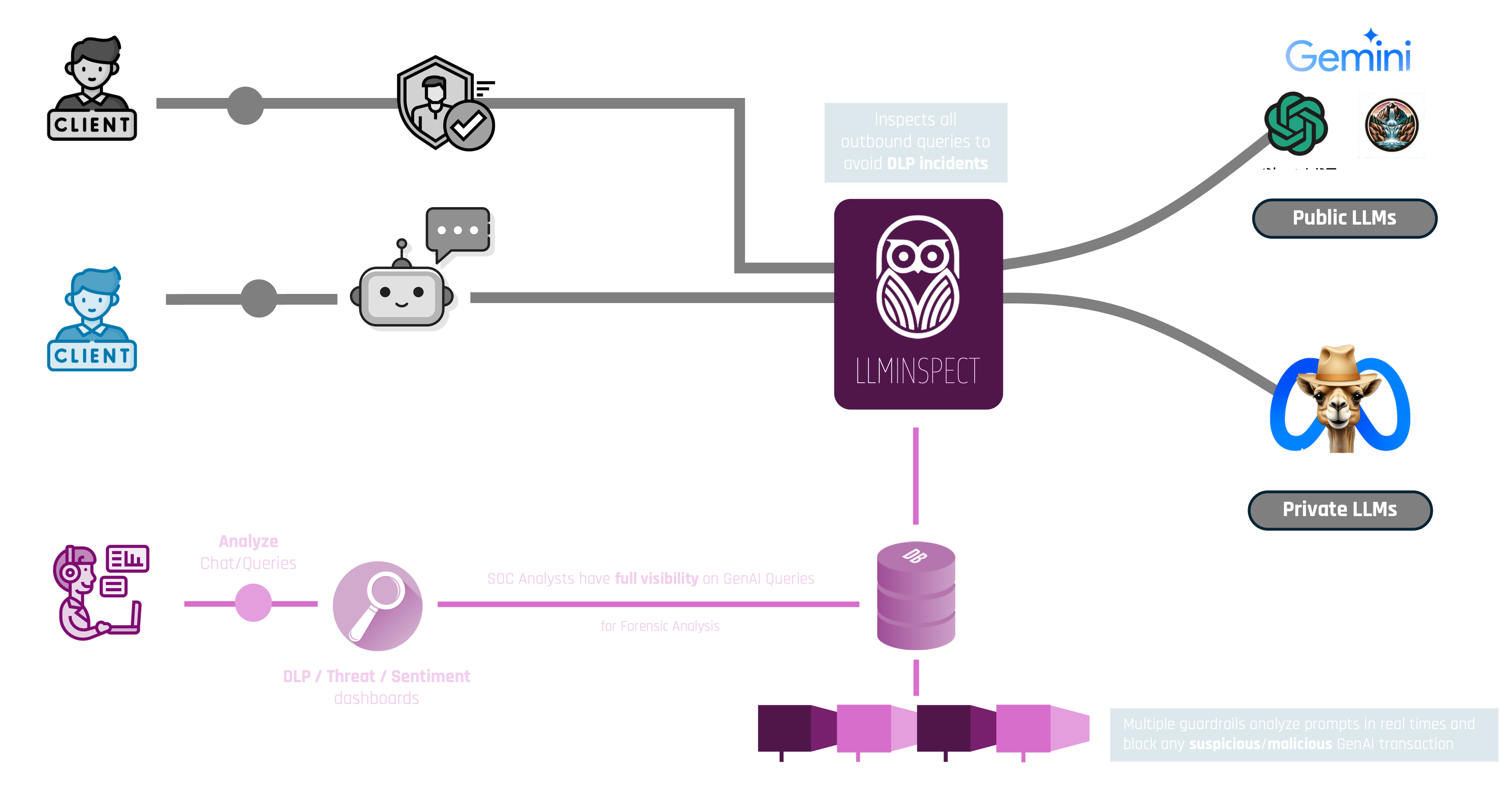

LLMInspect is a GenAI Gateway that sits between your users and any public or private LLM provider. It acts as a transparent proxy that inspects every prompt and response in real time: detecting sensitive content, enforcing policy guardrails, and logging activity for security teams.

LLMInspect does not change the user experience. Employees continue using their preferred AI tools while LLMInspect silently enforces your data security policies in the background.

How It Works

Core Features

- Unified Chat Interface: A familiar, intuitive chat GUI supporting Light and Dark modes, with no training required for end users.

- Multimodal Support: Upload and analyze images, leverage tool-calling agents, and trigger external API actions, all within a single interface.

- Multi-Provider Model Selection: Connect to leading public and private LLM providers, whether cloud-hosted or self-hosted. Switch models without leaving the platform.

- Enterprise Identity Integration: Supports LDAP, Active Directory, and OpenID for centralized user management and access control.

- Flexible Deployment: Deploy as a Proxy, Reverse Proxy, or Docker container, whether on-premises, in a private cloud, or as a SaaS service.

- Rate Limiting & Routing: Apply per-user or per-group query limits. Automatically reroute public LLM traffic to internal private models when needed.

- Multilingual UI: Interface available in multiple languages to support global enterprise deployments.

- Conversation Export: Export chat history in Markdown, JSON, and other formats for audit and documentation purposes.
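
The Rate Limiting & Routing item above can be illustrated with a minimal sketch. This is not LLMInspect's implementation or configuration format: the class name `QuotaRouter`, the limit values, the `force_private` flag, and the backend labels `public-llm` and `private-llm` are all hypothetical, chosen only to show a per-user sliding-window limit combined with a reroute decision.

```python
import time
from collections import defaultdict, deque


class QuotaRouter:
    """Illustrative per-user sliding-window limiter with a private-model route.

    Hypothetical sketch: names and limits are assumptions, not LLMInspect APIs.
    """

    def __init__(self, max_queries, window_seconds, clock=time.monotonic):
        self.max_queries = max_queries
        self.window_seconds = window_seconds
        self.clock = clock  # injectable so the behavior can be tested
        self._history = defaultdict(deque)  # user -> timestamps of recent queries

    def route(self, user, force_private=False):
        """Return 'public-llm', 'private-llm', or 'rejected' for one query."""
        now = self.clock()
        recent = self._history[user]
        # Drop timestamps that have fallen out of the window.
        while recent and now - recent[0] >= self.window_seconds:
            recent.popleft()
        if len(recent) >= self.max_queries:
            return "rejected"
        recent.append(now)
        # A policy decision made elsewhere (assumed) can force the internal model.
        return "private-llm" if force_private else "public-llm"
```

The same idea extends to per-group limits by keying the history on a group identifier instead of a user name.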

Guardrails Library

LLMInspect's Guardrails Library is a modular set of safety mechanisms that inspect every prompt and response in real time. Each guardrail can be enabled, configured, or combined independently, giving security teams precise control over what is allowed to pass through the gateway.

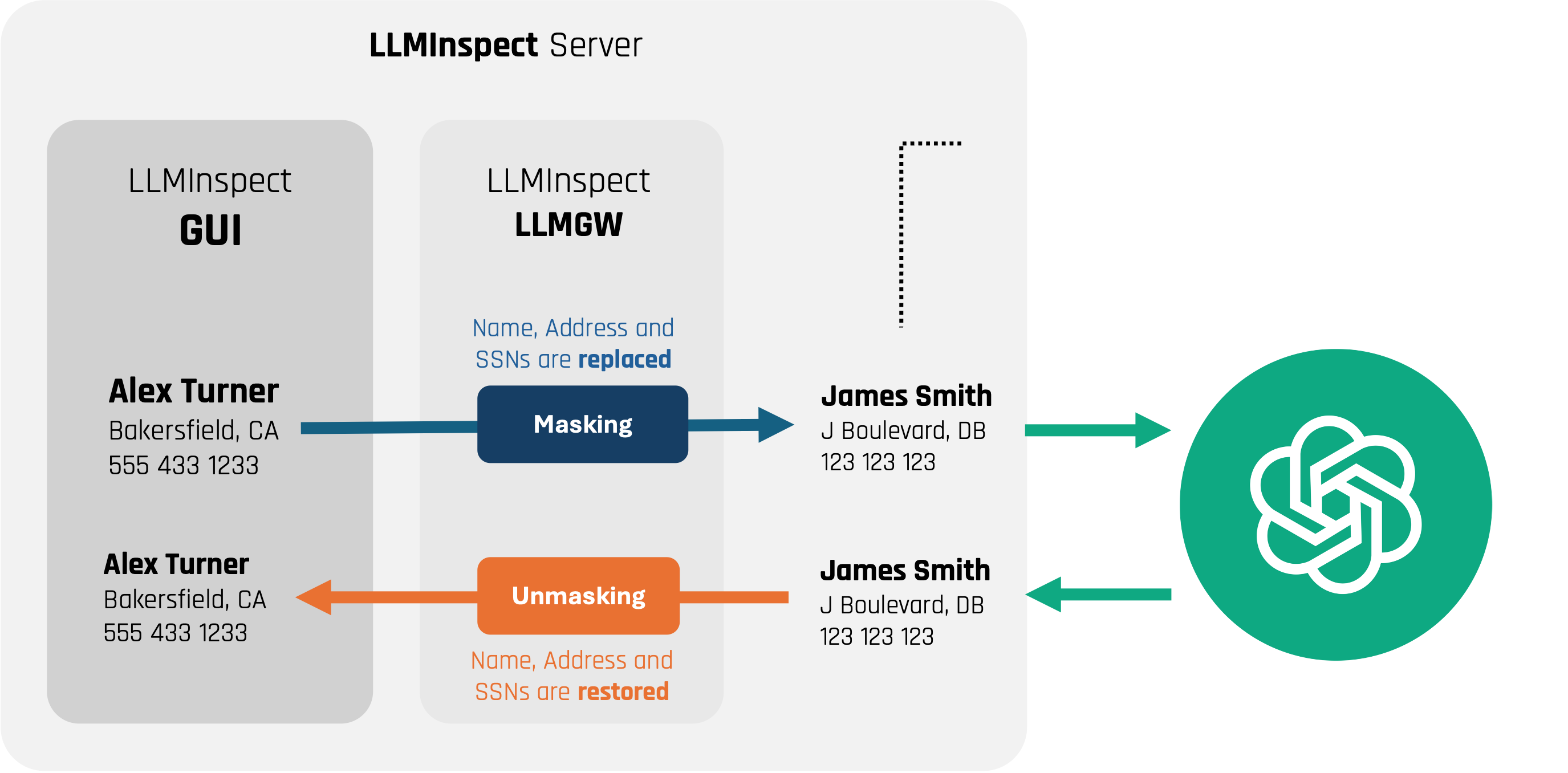
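
To make the idea of independently enabled, combinable guardrails concrete, here is a minimal sketch. It assumes each guardrail is a plain function that returns a violation message or `None`. The function names, the `GuardrailPipeline` class, and the two detection rules are hypothetical and far simpler than a production guardrail would be.

```python
import re
from dataclasses import dataclass, field
from typing import Callable, List, Optional

# A guardrail takes text and returns a violation message, or None if it passes.
Guardrail = Callable[[str], Optional[str]]

EMAIL_PATTERN = re.compile(r"[\w.+-]+@[\w-]+(?:\.[\w-]+)+")


def block_email_addresses(text: str) -> Optional[str]:
    """Hypothetical guardrail: flag anything that looks like an email address."""
    if EMAIL_PATTERN.search(text):
        return "email address detected"
    return None


def block_prompt_injection(text: str) -> Optional[str]:
    """Hypothetical guardrail: flag one well-known injection phrase."""
    if "ignore previous instructions" in text.lower():
        return "possible prompt injection"
    return None


@dataclass
class GuardrailPipeline:
    """Holds the guardrails that are currently enabled."""

    guardrails: List[Guardrail] = field(default_factory=list)

    def check(self, text: str) -> List[str]:
        """Run every enabled guardrail and collect the violations found."""
        violations = []
        for guardrail in self.guardrails:
            result = guardrail(text)
            if result is not None:
                violations.append(result)
        return violations
```

Enabling or disabling a guardrail in this sketch is just a matter of adding it to or removing it from the `guardrails` list, and the same pipeline can be applied to both prompts and responses.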

Data Sanitization

When LLMInspect detects sensitive data in a prompt, it does not simply block the request. Instead, it applies real-time data sanitization, replacing sensitive values with realistic, anonymous aliases before the prompt is forwarded to the LLM.

This means employees can still get meaningful answers from the AI while the underlying sensitive data never leaves the enterprise boundary. Once the response is returned, the original values are restored in context, keeping the experience seamless for the user.
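
The replace-then-restore flow described above can be sketched as follows. This is an illustration under stated assumptions, not LLMInspect's detection logic: it handles only email addresses, uses a simple regular expression, and generates aliases of the form `userN@example.com`. The function names `sanitize` and `restore` are hypothetical.

```python
import re

# Simplified email matcher, used here only as a stand-in for real detectors.
EMAIL_PATTERN = re.compile(r"[\w.+-]+@[\w-]+(?:\.[\w-]+)+")


def sanitize(prompt):
    """Replace each email address with a stable alias.

    Returns the sanitized prompt and an alias -> original mapping, which
    stays inside the gateway and is never sent to the LLM.
    """
    alias_to_original = {}
    original_to_alias = {}

    def substitute(match):
        original = match.group(0)
        if original not in original_to_alias:
            alias = f"user{len(original_to_alias) + 1}@example.com"
            original_to_alias[original] = alias
            alias_to_original[alias] = original
        # The same original value always maps to the same alias.
        return original_to_alias[original]

    return EMAIL_PATTERN.sub(substitute, prompt), alias_to_original


def restore(response, alias_to_original):
    """Put the original values back into the LLM response."""
    for alias, original in alias_to_original.items():
        response = response.replace(alias, original)
    return response
```

Keeping the alias stable for repeated values matters because the LLM can then still reason about "the same person" across the prompt, and the restore step can map every occurrence back.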

Observability & Audit

LLMInspect is fully instrumented with an open-standard telemetry framework, providing deep visibility into every aspect of GenAI usage across the enterprise. Logs, traces, and metrics are exported to your existing SIEM or observability stack in real time.

- Usage Tracking: See which users, teams, and departments are using GenAI and how much.

- Threat Detection: SOC analysts can identify anomalous prompts, repeated policy violations, or targeted prompt injection campaigns.

- Compliance Audit Trails: Every interaction is logged with user identity, timestamp, model used, guardrails triggered, and sanitization applied.

- Cost Management: Monitor token consumption per user or group to manage GenAI operational costs effectively.
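
As a rough illustration of the audit trail and cost tracking items above, the sketch below models one logged interaction and sums token usage per user. The field names follow the attributes listed above (user identity, timestamp, model used, guardrails triggered, sanitization applied), but the `AuditRecord` class, its exact schema, and the `tokens_per_user` helper are assumptions, not LLMInspect's actual log format.

```python
import json
from dataclasses import asdict, dataclass, field
from datetime import datetime, timezone
from typing import List


@dataclass
class AuditRecord:
    """Hypothetical shape of one audit log entry."""

    user: str
    model: str
    guardrails_triggered: List[str] = field(default_factory=list)
    sanitization_applied: bool = False
    prompt_tokens: int = 0
    completion_tokens: int = 0
    # UTC timestamp in ISO 8601 form, set when the record is created.
    timestamp: str = field(
        default_factory=lambda: datetime.now(timezone.utc).isoformat()
    )

    def to_json(self) -> str:
        """Serialize to a JSON line suitable for shipping to a log pipeline."""
        return json.dumps(asdict(self), sort_keys=True)


def tokens_per_user(records):
    """Sum prompt and completion tokens for each user."""
    totals = {}
    for record in records:
        used = record.prompt_tokens + record.completion_tokens
        totals[record.user] = totals.get(record.user, 0) + used
    return totals
```

A structured record like this is what makes the per-user and per-group reporting possible: the same entries serve compliance review, threat detection, and cost monitoring.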

Compliance & Standards

LLMInspect is designed to help enterprises meet their AI governance obligations under current and emerging regulatory frameworks.

The full audit log, data sanitization evidence, and guardrail activity reports generated by LLMInspect can be used directly as supporting documentation in compliance assessments and regulatory reviews.